- Neural networks (Computer science) -- Algorithms

- Machine learning

- Cooperating objects (Computer systems)

- Human-computer interaction

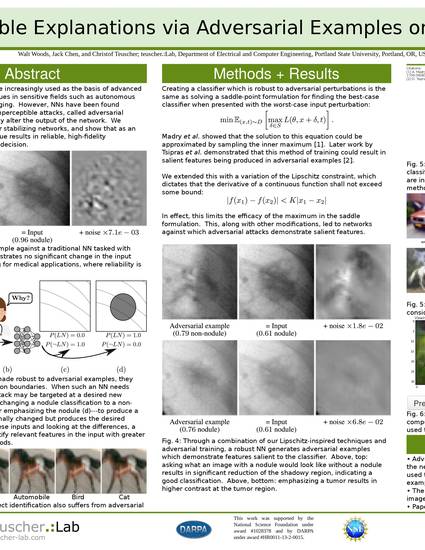

Neural Networks (NNs) are increasingly used as the basis of advanced machine learning techniques in sensitive fields such as autonomous vehicles and medical imaging. However, NNs have been found to be vulnerable to a class of imperceptible attacks, called adversarial examples, which can arbitrarily alter the network's output. To bridge the gap between the reliability that real-world applications require and the fragility of NNs, we propose a new method for stabilizing networks, and show that, as an added benefit, our technique yields reliable, high-fidelity explanations of the NN's decisions. Compared to the state of the art, this technique increased the area under the curve of accuracy versus the root-mean-squared error (RMSE) of allowed attacks by a factor of 1.8, and we demonstrate that it enables new Human-In-The-Loop (HITL) training techniques for NNs. On medical imaging, we show that our technique produces explanations that make significantly more sense to a human operator than those produced by previously proposed algorithms. The combination of increased network robustness and the ability to demonstrate decision boundaries to a human observer should pave the way for greatly improved HITL decision processes in future work.
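The robustness metric quoted above can be made concrete with a small sketch. It assumes (an interpretation, not something the abstract specifies) that the size of an attack is measured as the RMSE between the clean input and its perturbed version, that accuracy is measured at a sorted grid of allowed RMSE budgets, and that the area is computed with the trapezoidal rule. The function names `perturbation_rmse` and `accuracy_rmse_auc` and the toy accuracy values are hypothetical and are not results from this work.

```python
import math
from typing import Sequence


def perturbation_rmse(clean: Sequence[float], attacked: Sequence[float]) -> float:
    """Root-mean-squared difference between a clean input and its attacked version.

    Assumption: this is how the 'RMSE of an allowed attack' is measured.
    """
    if len(clean) != len(attacked) or not clean:
        raise ValueError("inputs must be non-empty and the same length")
    return math.sqrt(sum((a - c) ** 2 for c, a in zip(clean, attacked)) / len(clean))


def accuracy_rmse_auc(budgets: Sequence[float], accuracy: Sequence[float]) -> float:
    """Trapezoidal area under accuracy plotted against the allowed attack RMSE.

    budgets: increasing RMSE budgets at which the model was attacked.
    accuracy: accuracy (0..1) measured under attack at each budget.
    """
    if len(budgets) != len(accuracy) or len(budgets) < 2:
        raise ValueError("need at least two matching (budget, accuracy) points")
    area = 0.0
    for i in range(1, len(budgets)):
        width = budgets[i] - budgets[i - 1]
        if width < 0:
            raise ValueError("budgets must be sorted in increasing order")
        # Area of one trapezoid between consecutive budget points.
        area += width * (accuracy[i] + accuracy[i - 1]) / 2.0
    return area


# Hypothetical toy curves, invented for illustration only (not data from this work).
budgets = [0.0, 0.01, 0.02, 0.03]
baseline_acc = [0.9, 0.5, 0.1, 0.0]
stabilized_acc = [0.9, 0.8, 0.5, 0.2]

# The improvement factor is the ratio of the two areas over the same budget range.
improvement = accuracy_rmse_auc(budgets, stabilized_acc) / accuracy_rmse_auc(budgets, baseline_acc)
print(f"AUC improvement factor: {improvement:.2f}")
```

Because the factor is a ratio of areas over the same budget range, a value of 1.8 means the stabilized network's accuracy curve encloses 1.8 times the area of the baseline's curve, so it retains more accuracy across the range of attack strengths rather than at a single budget.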

© Copyright the author(s)

IN COPYRIGHT:

http://rightsstatements.org/vocab/InC/1.0/

This Item is protected by copyright and/or related rights. You are free to use this Item in any way that is permitted by the copyright and related rights legislation that applies to your use. For other uses you need to obtain permission from the rights-holder(s).


Available at: http://works.bepress.com/christof-teuscher/39/